Visualizing High-Dimensional Sentence Embeddings in Python: A Complete Guide (2026)

Master the art of visualizing high-dimensional sentence embeddings with this comprehensive Python guide. Learn dimensionality reduction using PCA and t-SNE.

In Natural Language Processing (NLP), visualizing high-dimensional sentence embeddings makes the semantic relationships between sentences easier to inspect. Transformer models like BERT and mBART produce embeddings that encode semantic meaning as vectors. These vectors typically have hundreds of dimensions (768 for BERT-base), so they cannot be plotted directly. This guide walks through reducing the dimensionality of these embeddings and visualizing them in 2D or 3D using Python.

Key Takeaways

- Learn how to reduce high-dimensional embeddings to 2D/3D.

- Visualize sentence embeddings using popular libraries in Python.

- Understand the significance of semantic similarity in embeddings.

- Gain insights into common techniques like PCA and t-SNE.

- Troubleshoot common errors encountered during visualization.

Visualizing sentence embeddings shows how sentences cluster by meaning, which is useful in applications such as sentiment analysis and topic modeling. By the end of this tutorial, you will be able to project your own sentence embeddings into 2D and inspect them for structure.

Prerequisites

- Basic understanding of Python programming.

- Familiarity with libraries such as NumPy, Matplotlib, and scikit-learn.

- A working Python environment (Python 3.8+ recommended) with transformers, torch, scikit-learn, and Matplotlib installed.

- Basic knowledge of how transformer models like BERT work.

Step 1: Encode Sentences Using a Transformer Model

The first step in visualizing sentence embeddings is to encode your sentences using a transformer model like BERT or mBART. These models transform sentences into high-dimensional vectors.

from transformers import BertTokenizer, BertModel
import torch

# Load pre-trained model tokenizer (vocabulary)
tokenizer = BertTokenizer.from_pretrained('bert-base-uncased')

# Encode sentences
sentences = [
    "I love machine learning",
    "Deep learning is amazing",
    "The cat is sleeping",
    "Dogs are very loyal"
]
encoded_inputs = tokenizer(sentences, padding=True, truncation=True, return_tensors="pt")

# Load pre-trained model (weights)
model = BertModel.from_pretrained('bert-base-uncased')

# Forward pass, get hidden states
with torch.no_grad():
    outputs = model(**encoded_inputs)
    # Average over the token axis: (batch, tokens, 768) -> (batch, 768)
    sentence_embeddings = outputs.last_hidden_state.mean(dim=1)

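Here, we use the BERT model to obtain a 768-dimensional vector for each sentence. The mean(dim=1) operation averages the token embeddings along the sequence axis to get a single sentence embedding. Because the batch is padded, this plain average also includes padding positions whenever sentences tokenize to different lengths; weighting the average by attention_mask avoids that.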
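One way to keep padding out of the average is to weight each token by the attention mask. The sketch below shows the arithmetic in NumPy on a toy array so it runs without downloading a model. The function name masked_mean_pool and the toy values are illustrative assumptions, not part of the code above.

```python
import numpy as np

def masked_mean_pool(token_embeddings, attention_mask):
    """Average token vectors per sentence, ignoring padded positions.

    token_embeddings: (batch, seq_len, hidden) array
    attention_mask:   (batch, seq_len) array of 1 (real token) / 0 (padding)
    """
    mask = attention_mask[:, :, None].astype(token_embeddings.dtype)
    summed = (token_embeddings * mask).sum(axis=1)
    counts = np.clip(mask.sum(axis=1), 1e-9, None)  # guard against division by zero
    return summed / counts

# Toy batch: 2 sentences, 3 token slots, hidden size 2.
token_embeddings = np.array([
    [[1.0, 2.0], [3.0, 4.0], [100.0, 100.0]],  # third slot is padding
    [[1.0, 1.0], [2.0, 2.0], [3.0, 3.0]],
])
attention_mask = np.array([[1, 1, 0], [1, 1, 1]])

pooled = masked_mean_pool(token_embeddings, attention_mask)
naive = token_embeddings.mean(axis=1)  # plain mean, padding included
print(pooled)
print(naive)
```

With real BERT output, the same idea would apply to outputs.last_hidden_state and encoded_inputs['attention_mask'], using the equivalent PyTorch operations.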

Step 2: Dimensionality Reduction

Since visualizing in 768 dimensions isn't feasible, we need to reduce the dimensionality. Two popular techniques for dimensionality reduction are Principal Component Analysis (PCA) and t-distributed Stochastic Neighbor Embedding (t-SNE).

Using PCA

PCA is a linear dimensionality reduction technique that projects data onto the directions of greatest variance, giving a lower-dimensional representation.

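from sklearn.decomposition import PCA

# scikit-learn works on NumPy arrays, so convert the PyTorch tensor explicitly
embeddings_np = sentence_embeddings.numpy()

pca = PCA(n_components=2)
embeddings_2d = pca.fit_transform(embeddings_np)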

PCA is fast and efficient for reducing dimensions, but it may not capture non-linear structures in the data.
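To make the "linear projection" concrete, here is a minimal sketch of PCA written directly with NumPy's SVD. The helper name pca_project and the random toy data are assumptions for illustration; the result should match scikit-learn's PCA up to the sign of each component.

```python
import numpy as np

def pca_project(X, n_components=2):
    """Project the rows of X onto the top principal components (via SVD)."""
    X_centered = X - X.mean(axis=0)
    # Rows of Vt are the principal directions, ordered by explained variance.
    U, S, Vt = np.linalg.svd(X_centered, full_matrices=False)
    components = Vt[:n_components]
    projected = X_centered @ components.T
    # Share of the total variance captured by each kept component.
    explained_ratio = (S ** 2)[:n_components] / (S ** 2).sum()
    return projected, explained_ratio

rng = np.random.default_rng(0)
toy_embeddings = rng.normal(size=(10, 768))  # stand-in for sentence embeddings
coords, ratio = pca_project(toy_embeddings)
print(coords.shape)
print(ratio)
```

The explained-variance ratio is worth checking before trusting a 2D plot: if the two components capture only a small share of the variance, much of the structure is not visible in the projection.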

Using t-SNE

t-SNE is a non-linear technique that is particularly effective for visualizing high-dimensional data in 2D or 3D.

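from sklearn.manifold import TSNE

# perplexity must be smaller than the number of samples (4 here);
# the default of 30 raises a ValueError in recent scikit-learn releases
tsne = TSNE(n_components=2, perplexity=2, random_state=42)
embeddings_2d_tsne = tsne.fit_transform(sentence_embeddings.numpy())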

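t-SNE preserves the local structure of the data, so nearby points in the plot tend to be nearby in the original space, but it can be computationally expensive, and distances between well-separated clusters are not reliable. Its perplexity parameter must be smaller than the number of samples, so small datasets need a lower value than the default of 30.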
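The perplexity constraint is easy to trip over when the number of sentences varies. The sketch below picks a perplexity from the dataset size before fitting. The helper pick_perplexity and its "half the sample count" rule are arbitrary illustrative assumptions, not a scikit-learn recommendation, and the embeddings are random stand-ins.

```python
import numpy as np
from sklearn.manifold import TSNE

def pick_perplexity(n_samples, preferred=30.0):
    """Return a perplexity strictly below n_samples (hypothetical helper)."""
    if n_samples < 2:
        raise ValueError("t-SNE needs at least two samples")
    # Half the sample count is an arbitrary cap chosen for this sketch.
    return float(min(preferred, n_samples / 2))

rng = np.random.default_rng(0)
toy_embeddings = rng.normal(size=(4, 768)).astype(np.float32)

perplexity = pick_perplexity(toy_embeddings.shape[0])
tsne = TSNE(n_components=2, perplexity=perplexity, random_state=42)
coords = tsne.fit_transform(toy_embeddings)
print(perplexity, coords.shape)
```

For larger datasets the helper simply returns the preferred value, so the same call works for both a handful of sentences and several thousand.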

Step 3: Visualize the Embeddings

Once dimensionality is reduced, the embeddings can be visualized using Matplotlib.

import matplotlib.pyplot as plt

# Plotting PCA
plt.figure(figsize=(8, 6))
plt.scatter(embeddings_2d[:, 0], embeddings_2d[:, 1], c='blue')
for i, sentence in enumerate(sentences):
    plt.annotate(sentence, (embeddings_2d[i, 0], embeddings_2d[i, 1]))
plt.title('PCA of Sentence Embeddings')
plt.xlabel('PCA 1')
plt.ylabel('PCA 2')
plt.show()

# Plotting t-SNE
plt.figure(figsize=(8, 6))
plt.scatter(embeddings_2d_tsne[:, 0], embeddings_2d_tsne[:, 1], c='red')
for i, sentence in enumerate(sentences):
    plt.annotate(sentence, (embeddings_2d_tsne[i, 0], embeddings_2d_tsne[i, 1]))
plt.title('t-SNE of Sentence Embeddings')
plt.xlabel('t-SNE 1')
plt.ylabel('t-SNE 2')
plt.show()

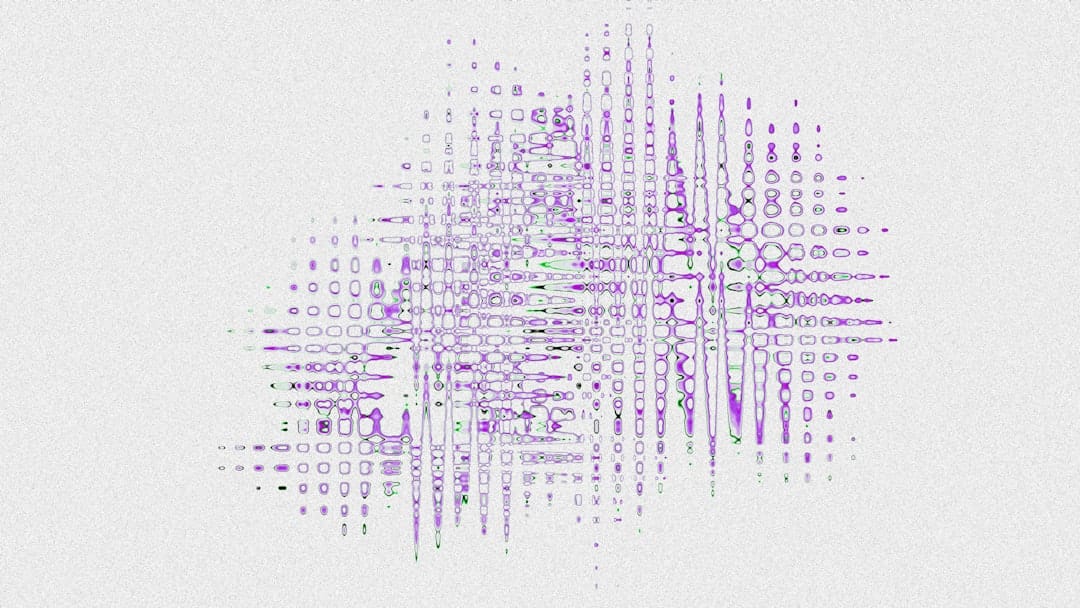

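In the scatter plots above, sentences that sit close together are ones the model embeds similarly, which suggests related meaning. With only four sentences the plots are illustrative rather than conclusive, and any 2D projection distorts some of the original distances.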
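Because a 2D projection can distort distances, it can help to cross-check proximity in the original space. The sketch below computes pairwise cosine similarity with NumPy; the function name cosine_similarity_matrix and the toy vectors are assumptions for illustration.

```python
import numpy as np

def cosine_similarity_matrix(embeddings):
    """Pairwise cosine similarity between the rows of a 2D array."""
    norms = np.linalg.norm(embeddings, axis=1, keepdims=True)
    unit = embeddings / np.clip(norms, 1e-12, None)  # avoid division by zero
    return unit @ unit.T

toy = np.array([
    [1.0, 0.0, 0.0],
    [2.0, 0.0, 0.0],  # same direction as row 0
    [0.0, 1.0, 0.0],  # orthogonal to row 0
])
sims = cosine_similarity_matrix(toy)
print(np.round(sims, 2))
```

Applied to the tutorial's data, the call would be cosine_similarity_matrix(sentence_embeddings.numpy()); pairs that look close in the plot should also score high here.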

Common Errors/Troubleshooting

- Model Loading Issues: Ensure you have the right version of the transformers library and a stable internet connection to download model weights.

- Memory Errors: t-SNE can be memory-intensive. If you encounter memory issues, consider reducing the dataset size or using dimensionality reduction like PCA before applying t-SNE.

- Annotation Overlap: If annotations on the plot overlap, enlarge the figure or use the adjustText library (its adjust_text function) to reposition the labels.
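The PCA-before-t-SNE suggestion from the memory bullet can be sketched as a two-stage pipeline. The random 200 x 768 array stands in for real sentence embeddings, and the intermediate size of 50 dimensions is a commonly used value assumed here, not something this guide prescribes.

```python
import numpy as np
from sklearn.decomposition import PCA
from sklearn.manifold import TSNE

rng = np.random.default_rng(0)
toy_embeddings = rng.normal(size=(200, 768)).astype(np.float32)

# Stage 1: cheap linear reduction, which shrinks the input t-SNE has to process.
reduced = PCA(n_components=50, random_state=0).fit_transform(toy_embeddings)

# Stage 2: t-SNE on the smaller representation.
tsne = TSNE(n_components=2, perplexity=30, random_state=42)
coords = tsne.fit_transform(reduced)
print(reduced.shape, coords.shape)
```

The intermediate PCA dimension must not exceed the number of samples or features, so very small datasets need a smaller value than 50.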

Conclusion

Visualizing high-dimensional sentence embeddings in 2D or 3D can significantly enhance your understanding of semantic relationships within text data. By reducing dimensionality using PCA or t-SNE and visualizing with tools like Matplotlib, you can gain valuable insights into the structure and meaning of your data. This guide provides a step-by-step approach to achieving this in Python, ensuring you can apply these techniques to a wide range of NLP tasks.

Frequently Asked Questions

How does dimensionality reduction help in visualization?

Dimensionality reduction techniques like PCA and t-SNE help in transforming high-dimensional data into 2D or 3D while preserving important patterns, making it easier to visualize and understand the data.

Why use t-SNE over PCA for visualizing embeddings?

t-SNE is often preferred for visualization because it captures non-linear relationships and preserves local neighborhoods, which tends to make clusters easier to see. PCA is linear and may miss such patterns, but it is faster, deterministic, and better at preserving global structure.

What are some common issues when visualizing embeddings?

Common issues include memory constraints with t-SNE, overlapping annotations in plots, and model loading errors. These can often be addressed by optimizing data size, plot configuration, and ensuring correct library versions.